baseline 1 2 3 4 5 6 7 8 9 10 11 12 13 __global__ void device_copy_scalar_kernel (int * d_in, int * d_out, int N) { int idx = blockIdx.x * blockDim.x + threadIdx.x; for (int i = idx; i < N; i += blockDim.x * gridDim.x) { d_out[i] = d_in[i]; } } void device_copy_scalar (int * d_in, int * d_out, int N) { int threads = 128 ; int blocks = min((N + threads-1 ) / threads, MAX_BLOCKS); device_copy_scalar_kernel<<<blocks, threads>>>(d_in, d_out, N); }

简单的分块拷贝。

通过cuobjdump -sass executable.得到对应的标量copy对应的SASS代码

1 2 3 4 5 6 IMAD R6.CC, R0, R9, c[0x0 ][0x140 ] IMAD.HI.X R7, R0, R9, c[0x0 ][0x144 ] IMAD R4.CC, R0, R9, c[0x0 ][0x148 ] LD.E R2, [R6] IMAD.HI.X R5, R0, R9, c[0x0 ][0x14c ] ST.E [R4], R2

(SASS不熟悉,请看SASS一文)

其中4条IMAD指令计算出读取和存储的指令地址R6:R7和R4:R5。第4和6条指令执行32位的访存命令。

Vector way1: CUDA C/C++ standard headers 通过使用int2, int4, or float2

比如将int的指针d_in类型转换然后赋值。

1 2 3 reinterpret_cast<int2*>(d_in) (int2*(d_in))

但是需要注意对齐问题,比如

1 reinterpret_cast<int2*>(d_in+1 )

这样是非法的。

Vector way2: structures 通过使用对齐的结构体来实现同样的目的。

1 2 3 4 struct Foo {int a, int b, double c}; Foo *x, *y; … x[i]=y[i];

实际修改LD.E.64 执行for循环次数减半,注意边界处理。

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 __global__ void device_copy_vector2_kernel (int * d_in, int * d_out, int N) { int idx = blockIdx.x * blockDim.x + threadIdx.x; for (int i = idx; i < N/2 ; i += blockDim.x * gridDim.x) { reinterpret_cast<int2*>(d_out)[i] = reinterpret_cast<int2*>(d_in)[i]; } if (idx==N/2 && N%2 ==1 ) d_out[N-1 ] = d_in[N-1 ]; } void device_copy_vector2 (int * d_in, int * d_out, int n) { threads = 128 ; blocks = min((N/2 + threads-1 ) / threads, MAX_BLOCKS); device_copy_vector2_kernel<<<blocks, threads>>>(d_in, d_out, N); }

对应汇编可以看出

1 2 3 4 5 6 IMAD R10.CC, R3, R5, c[0x0 ][0x140 ] IMAD.HI.X R11, R3, R5, c[0x0 ][0x144 ] IMAD R8.CC, R3, R5, c[0x0 ][0x148 ] LD.E.64 R6, [R10] IMAD.HI.X R9, R3, R5, c[0x0 ][0x14c ] ST.E.64 [R8], R6

变成了LD.E.64

实际修改LD.E.128 执行for循环次数减半,注意边界处理。

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 __global__ void device_copy_vector4_kernel (int * d_in, int * d_out, int N) { int idx = blockIdx.x * blockDim.x + threadIdx.x; for (int i = idx; i < N/4 ; i += blockDim.x * gridDim.x) { reinterpret_cast<int4*>(d_out)[i] = reinterpret_cast<int4*>(d_in)[i]; } int remainder = N%4 ; if (idx==N/4 && remainder!=0 ) { while (remainder) { int idx = N - remainder--; d_out[idx] = d_in[idx]; } } } void device_copy_vector4 (int * d_in, int * d_out, int N) { int threads = 128 ; int blocks = min((N/4 + threads-1 ) / threads, MAX_BLOCKS); device_copy_vector4_kernel<<<blocks, threads>>>(d_in, d_out, N); }

对应汇编可以看出

1 2 3 4 5 6 IMAD R10.CC, R3, R13, c[0x0 ][0x140 ] IMAD.HI.X R11, R3, R13, c[0x0 ][0x144 ] IMAD R8.CC, R3, R13, c[0x0 ][0x148 ] LD.E.128 R4, [R10] IMAD.HI.X R9, R3, R13, c[0x0 ][0x14c ] ST.E.128 [R8], R4

变成了LD.E.128

summary

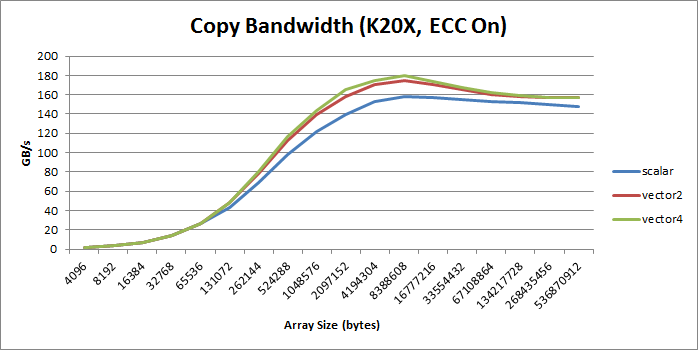

(个人感觉,提升也不大吗?也没有两倍和四倍的效果)

绝大部分情况,向量比标量好, increase bandwidth, reduce instruction count, and reduce latency. 。

但是会增加额外的寄存器(SASS里也没有看到??)和降低并行性(什么意思???)

参考文献 https://developer.nvidia.com/blog/cuda-pro-tip-increase-performance-with-vectorized-memory-access/#entry-content-comments